Autoditerima.ai

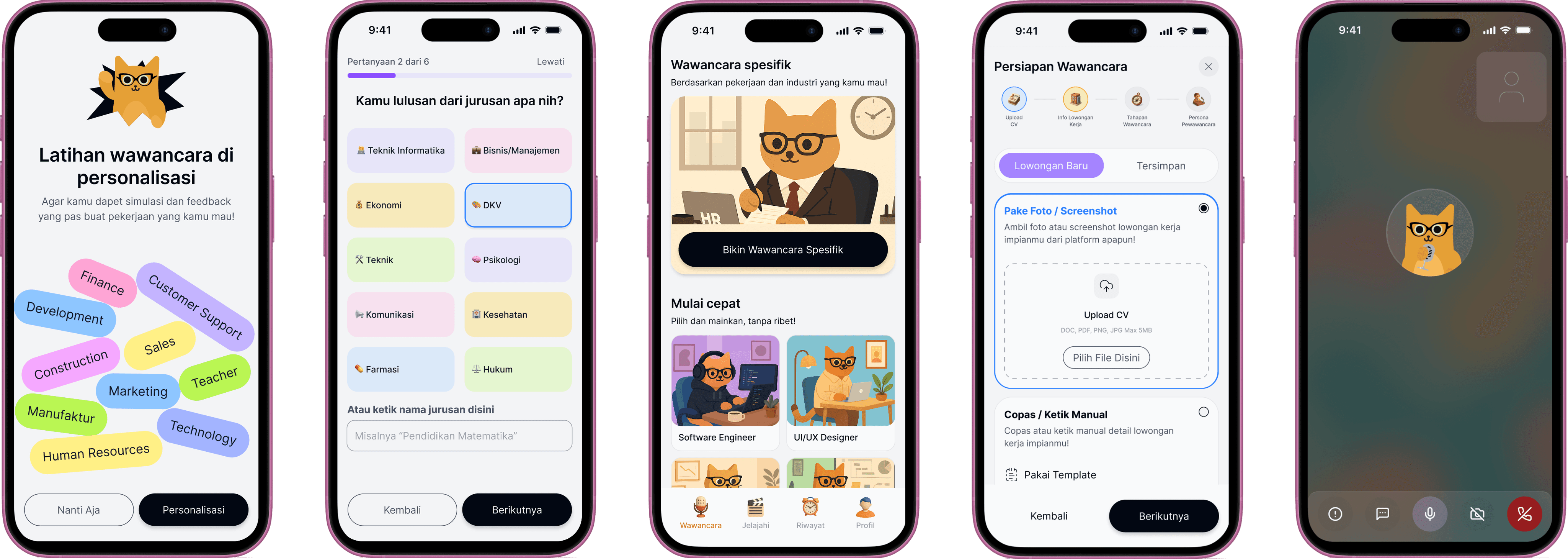

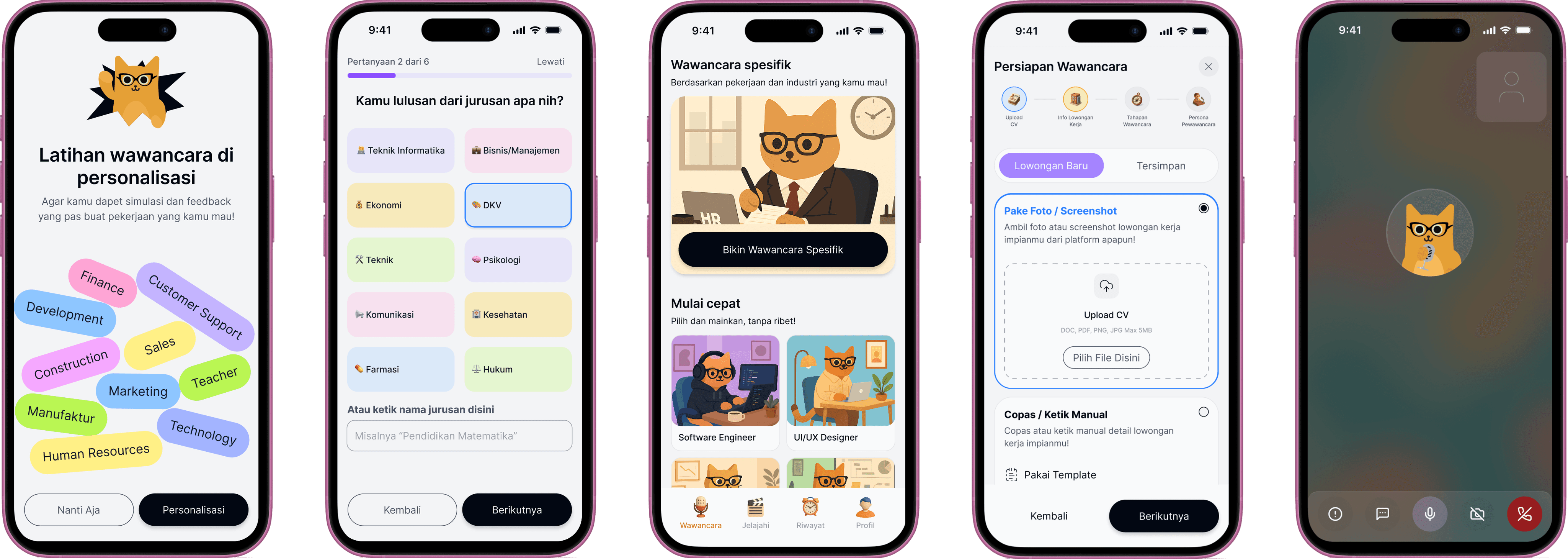

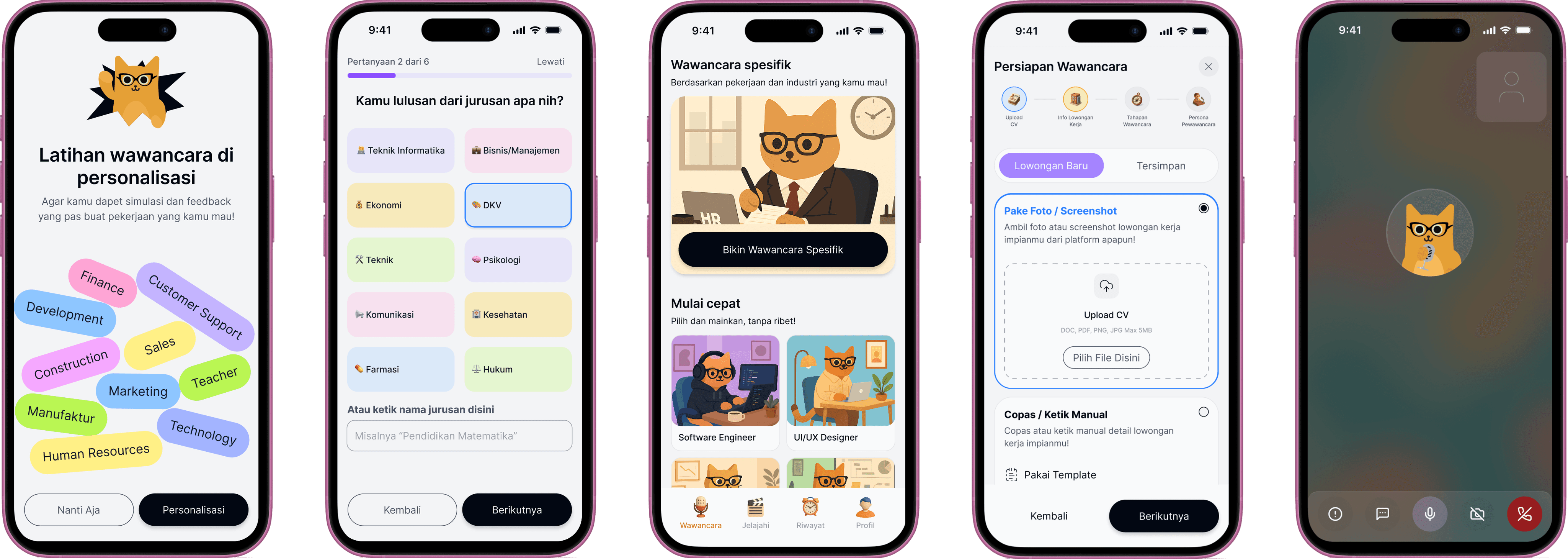

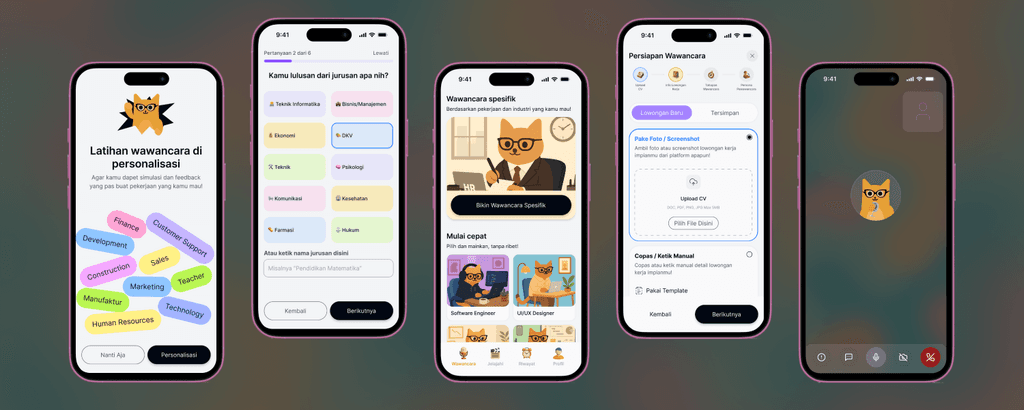

autoditerima.ai is an AI-based job interview practice platform designed specifically for fresh graduates and job seekers in the tech industry. With realistic simulations, data-driven feedback, and self-branding features, autoditerima.ai helps users appear more prepared when facing the job selection process.

My Role

UI/UX Designer

Duration

June 2025 - Present

Team

CEO Wiliam, CTO Feraldo, Fullstack Kevin, Marketing Candy

What did I do?

Research

Visual Design

UX Motion

Prototyping

Illustrator

Content Planner

AI Product Research

Goals

Understanding the needs and challenges of fresh graduates in facing job interviews

Evaluating the effectiveness of automated feedback in improving user interview readiness

Developing realistic AI-based interview simulations, based on CV and job requirements provided by users

Identifying success metrics to measure user suitability for the intended position

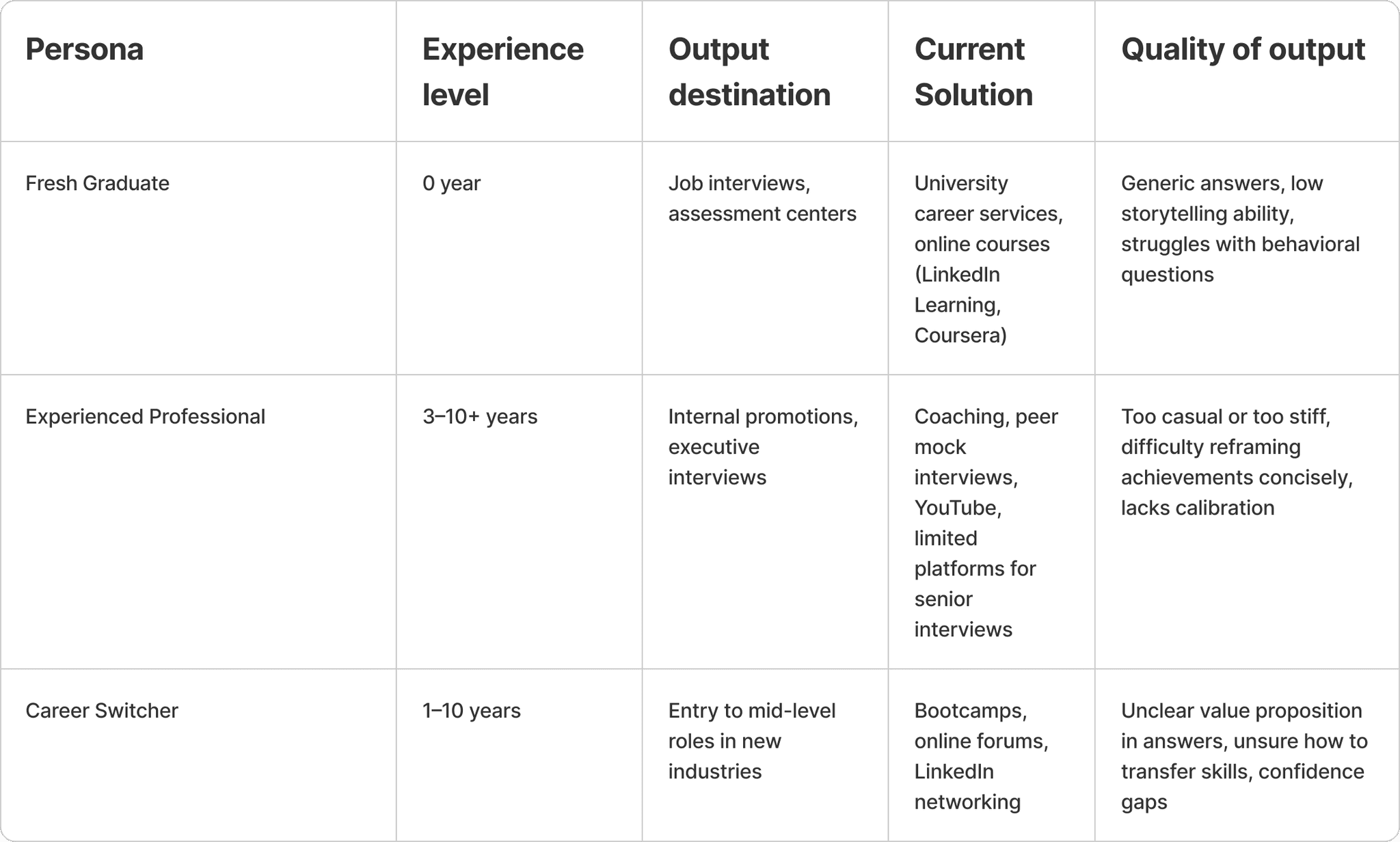

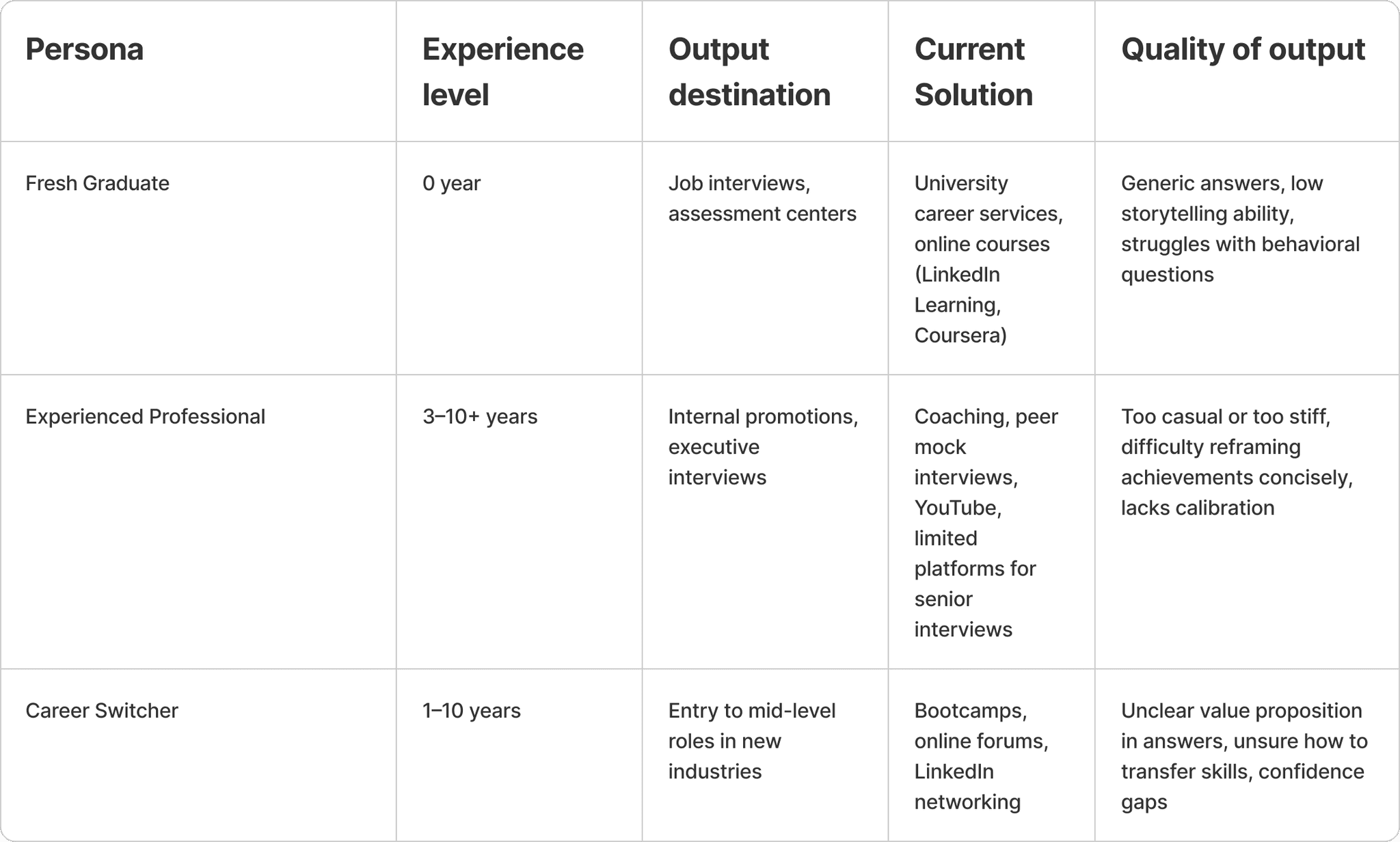

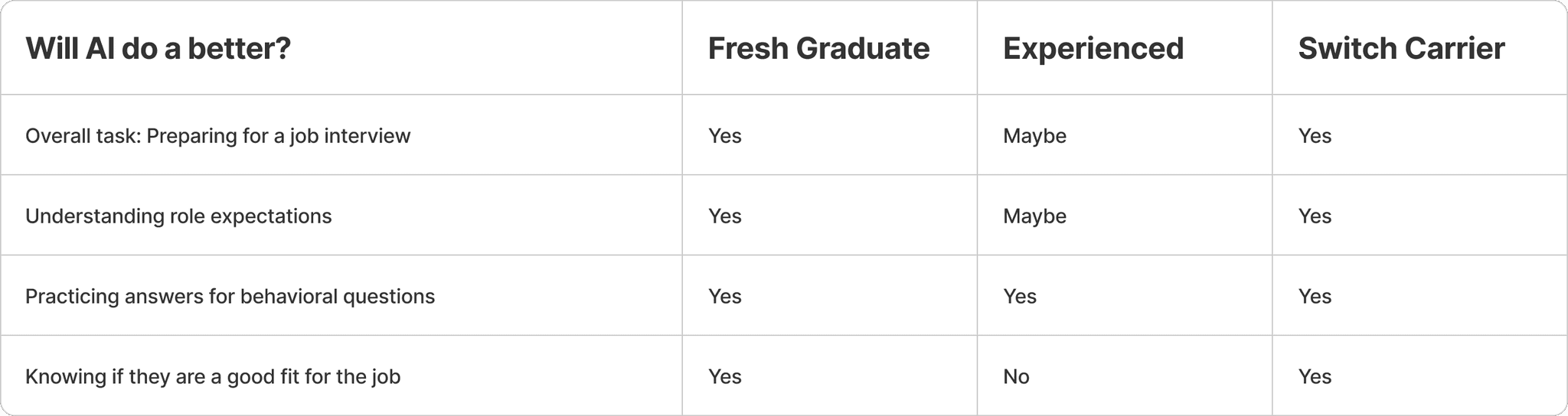

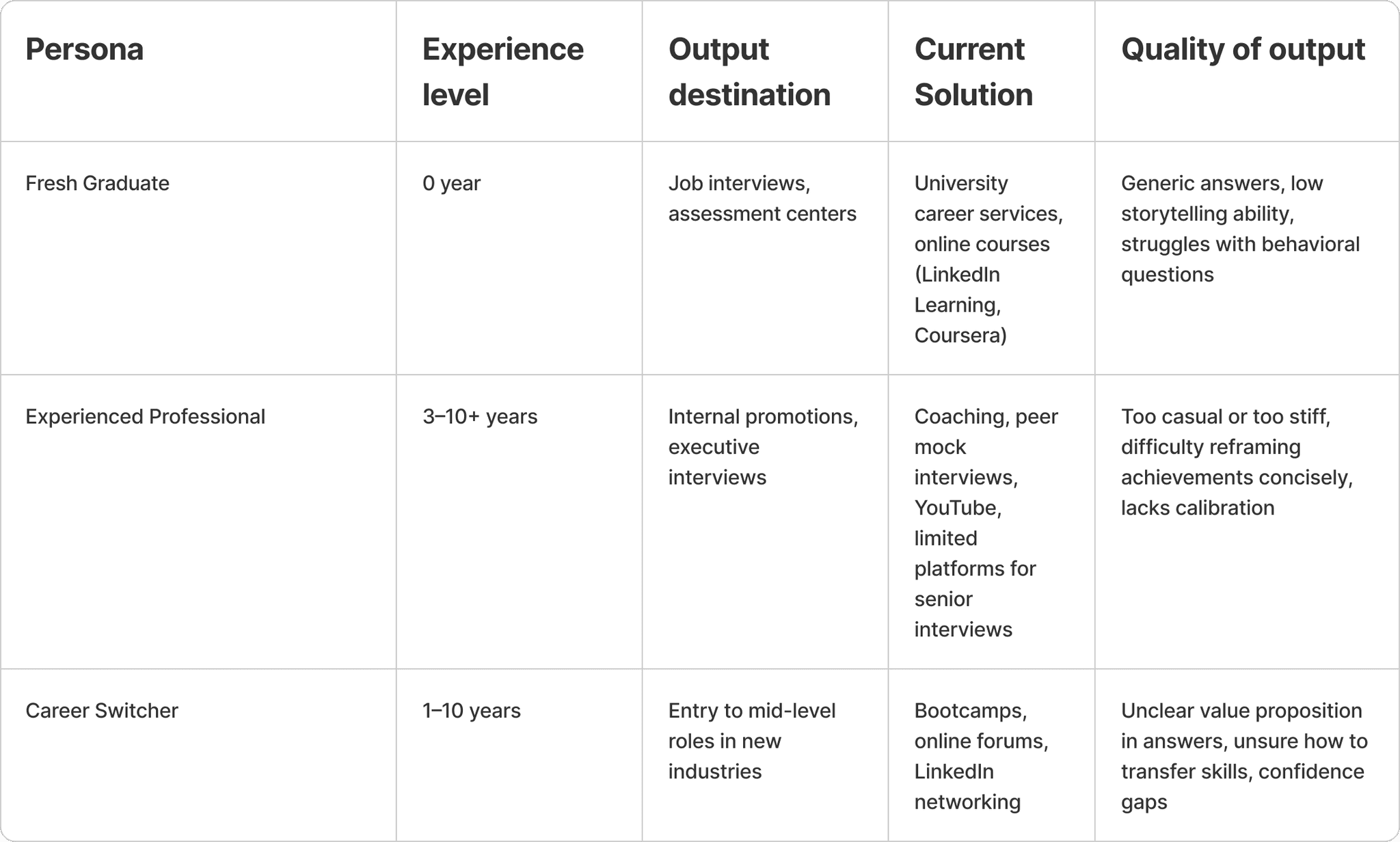

Identify User

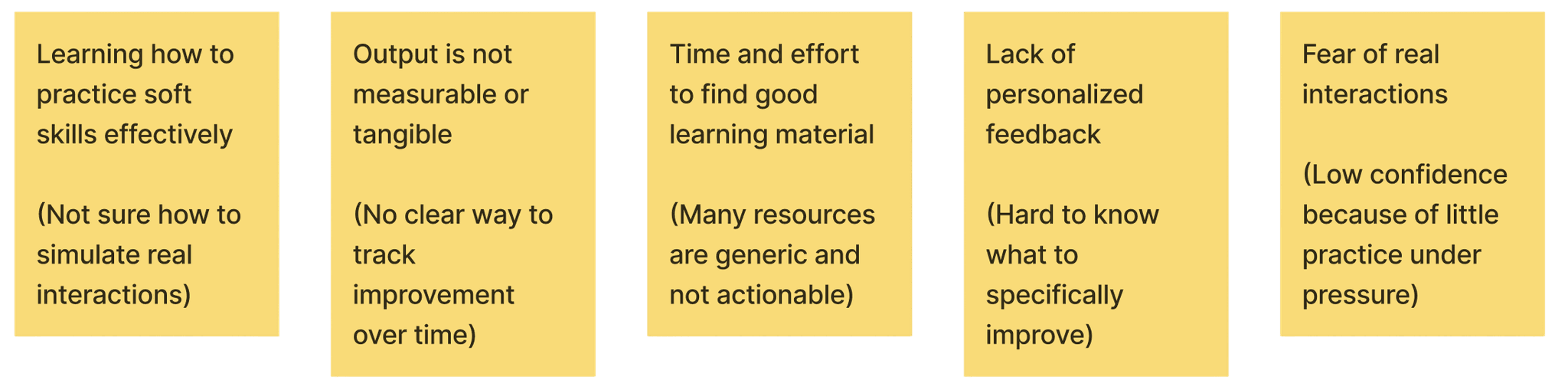

Identify User Problem

Job to be done

Practice soft skills to succeed in real-world interactions

Workflow

Search for online soft skills training (YouTube videos, LinkedIn Learning, Udemy, etc.).

Enroll in general courses or webinars.

Passively watch video lectures or read materials.

Try to apply skills in real or simulated settings (e.g., mock interviews, mock sales calls).

Seek feedback manually (from peers, mentors, managers).

Repeat practice individually, without structured feedback loops.

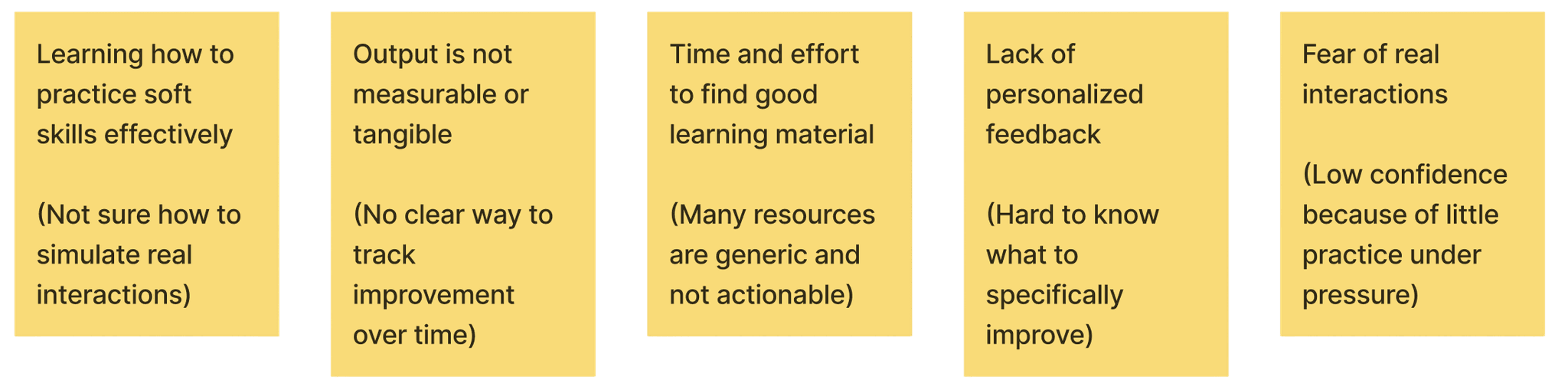

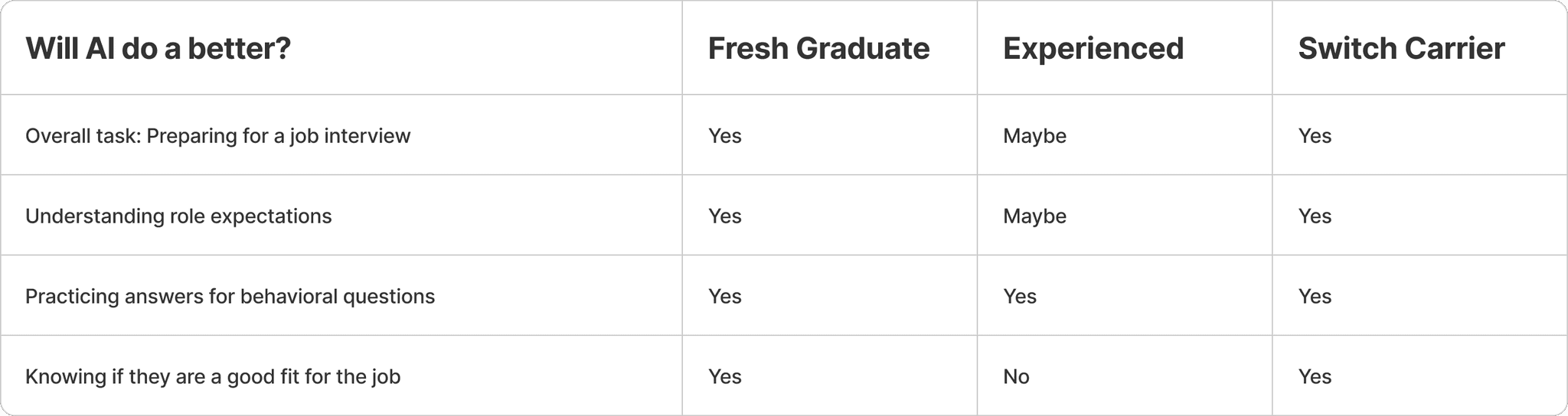

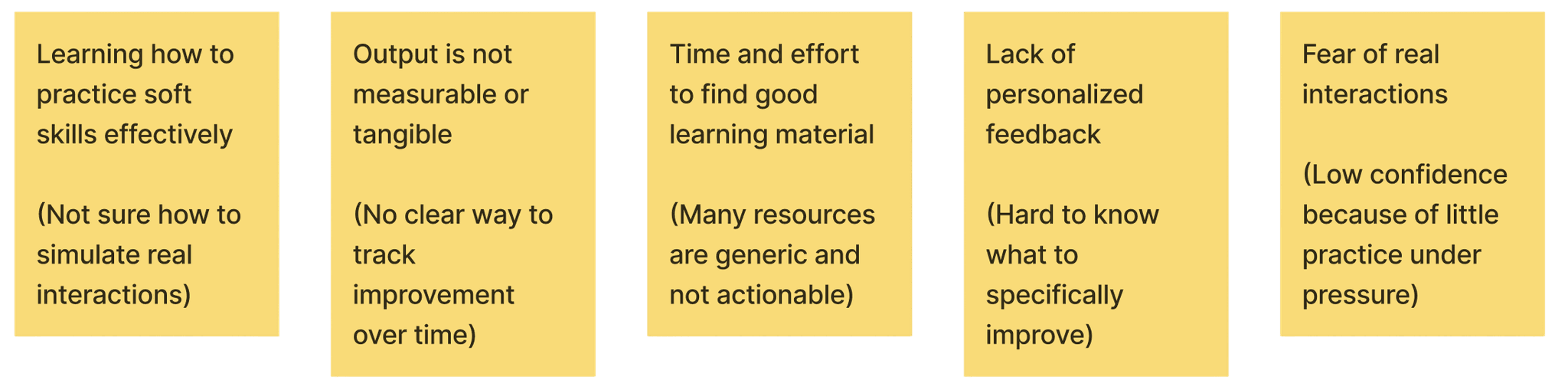

Pain Point

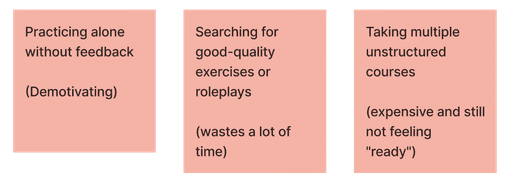

Most effort / time / money spent on

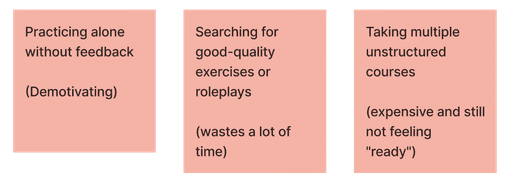

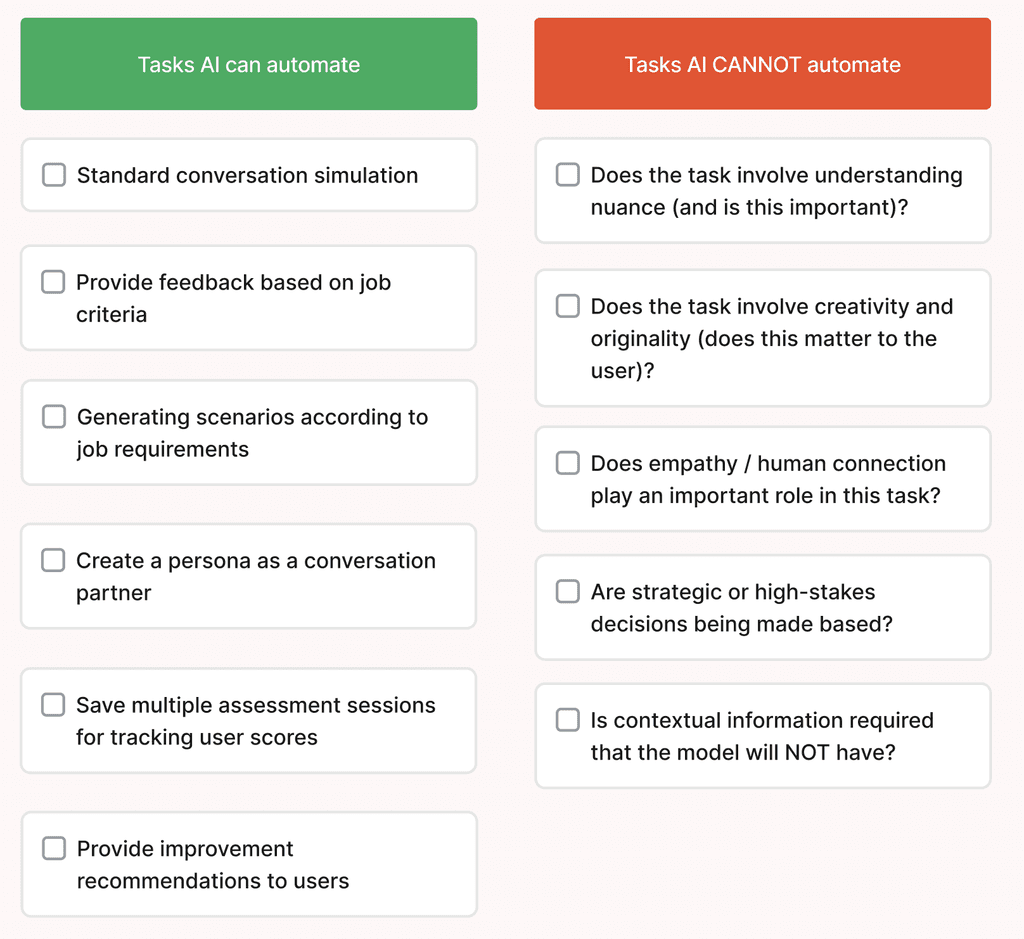

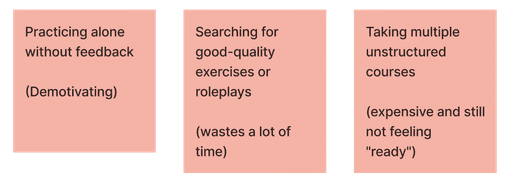

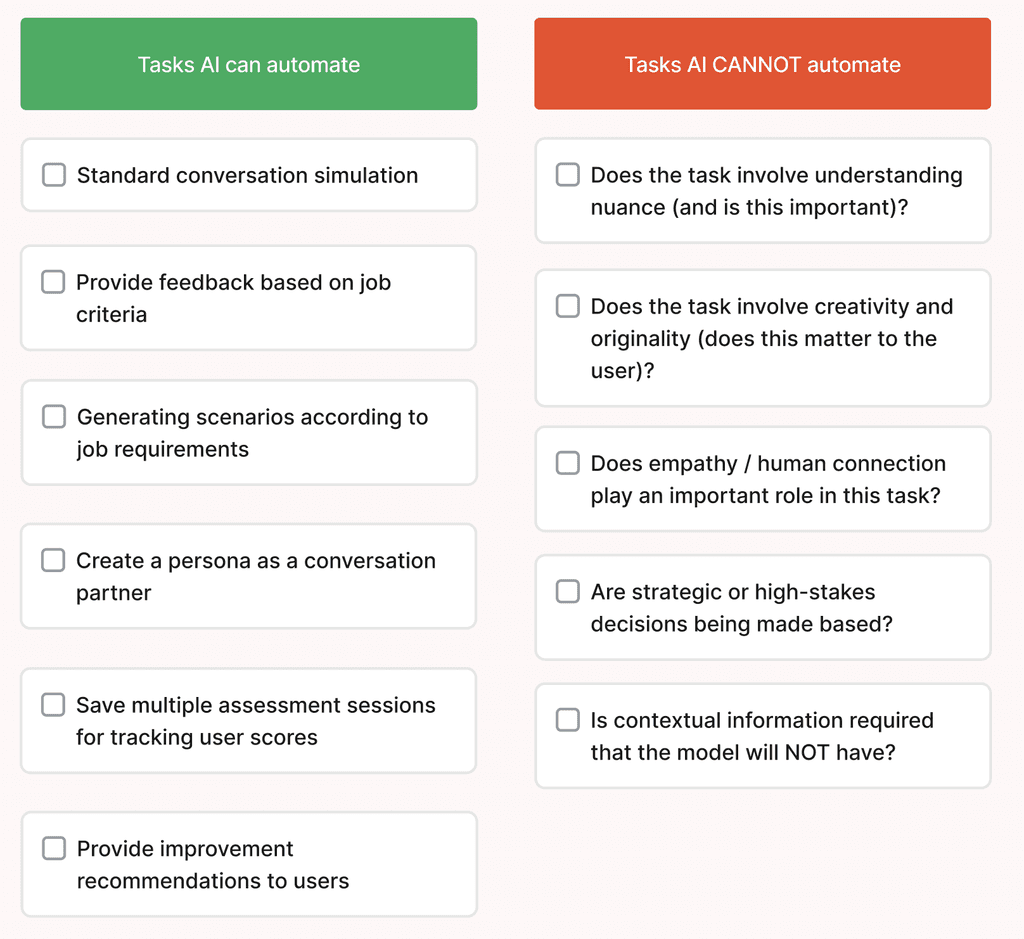

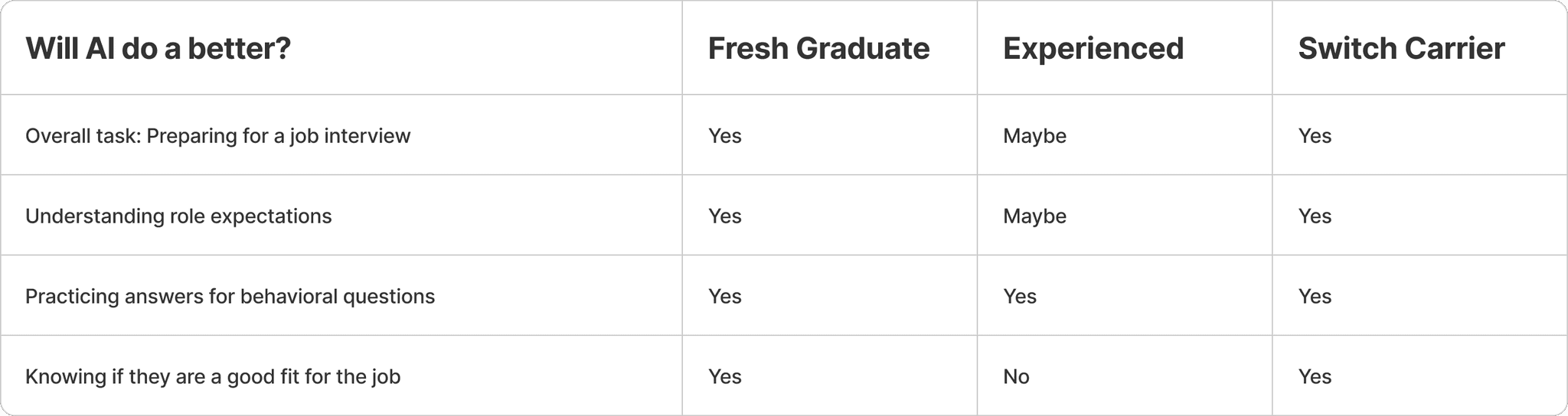

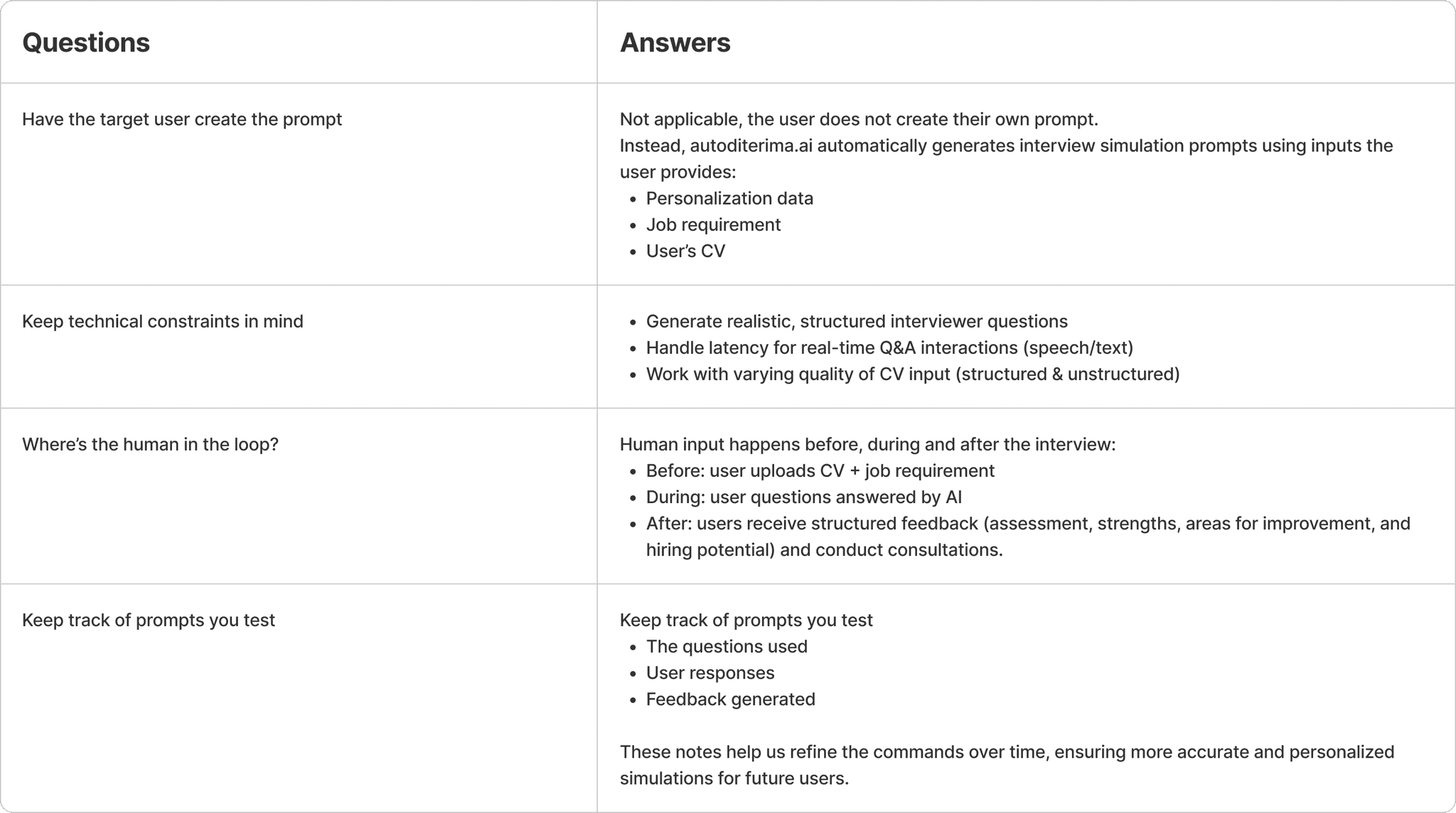

Understand AI’s capabilities as a tool

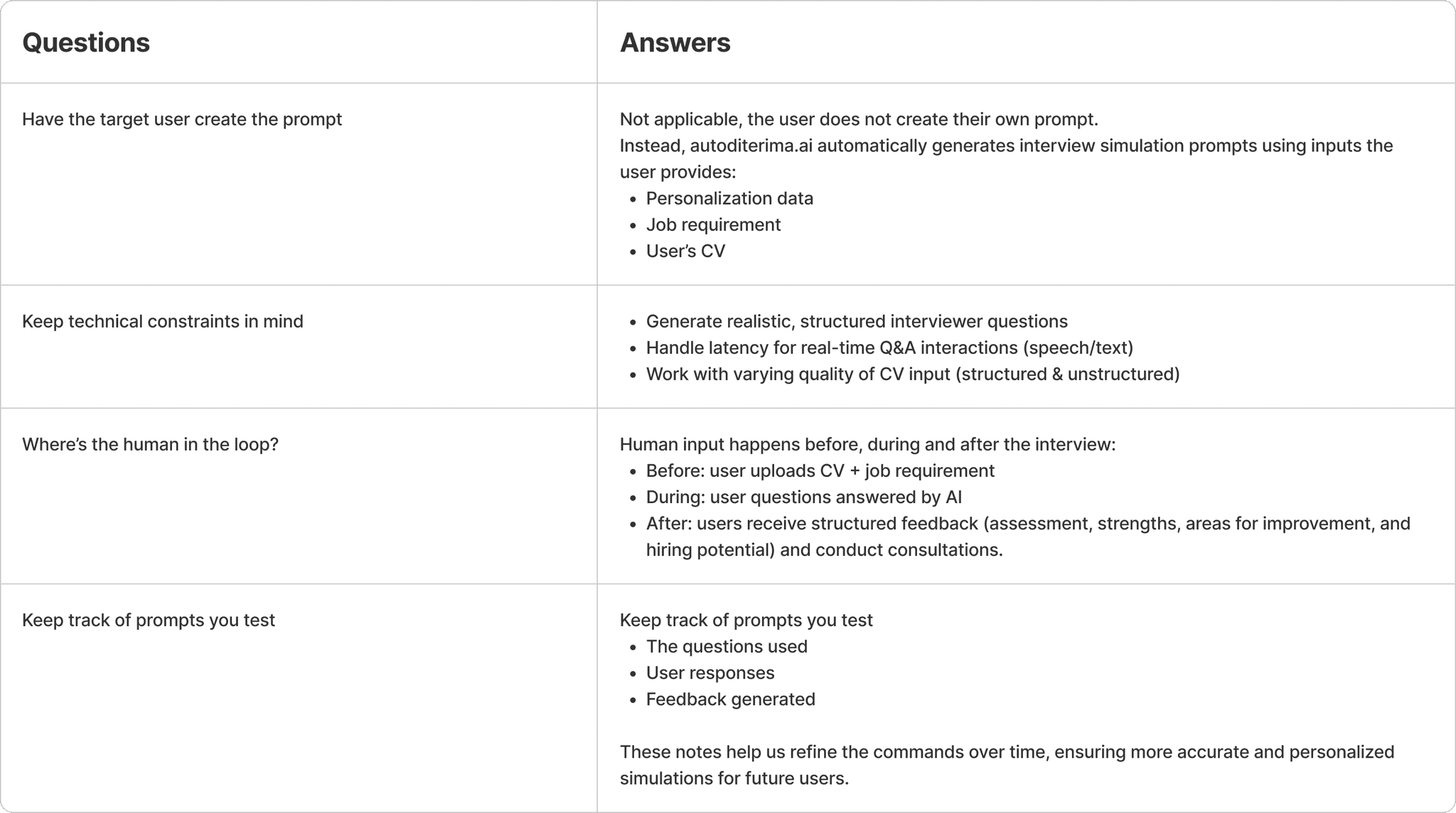

Align the team on AI’s capabilities: what it can and cannot do well.

Defining the Solution

Prompting!

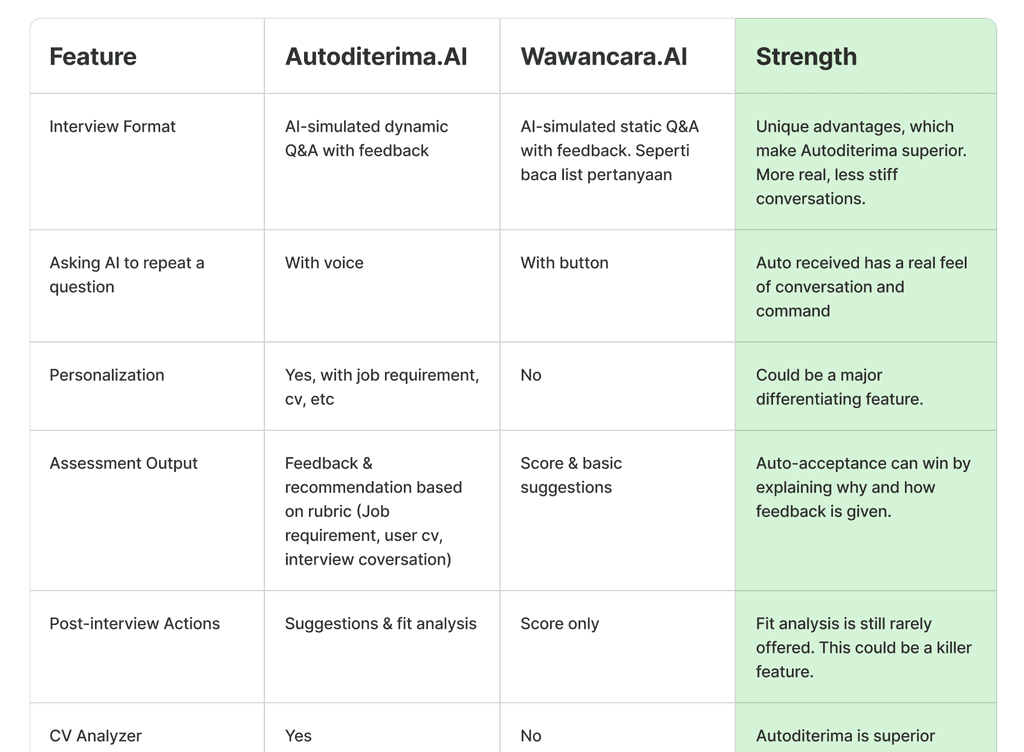

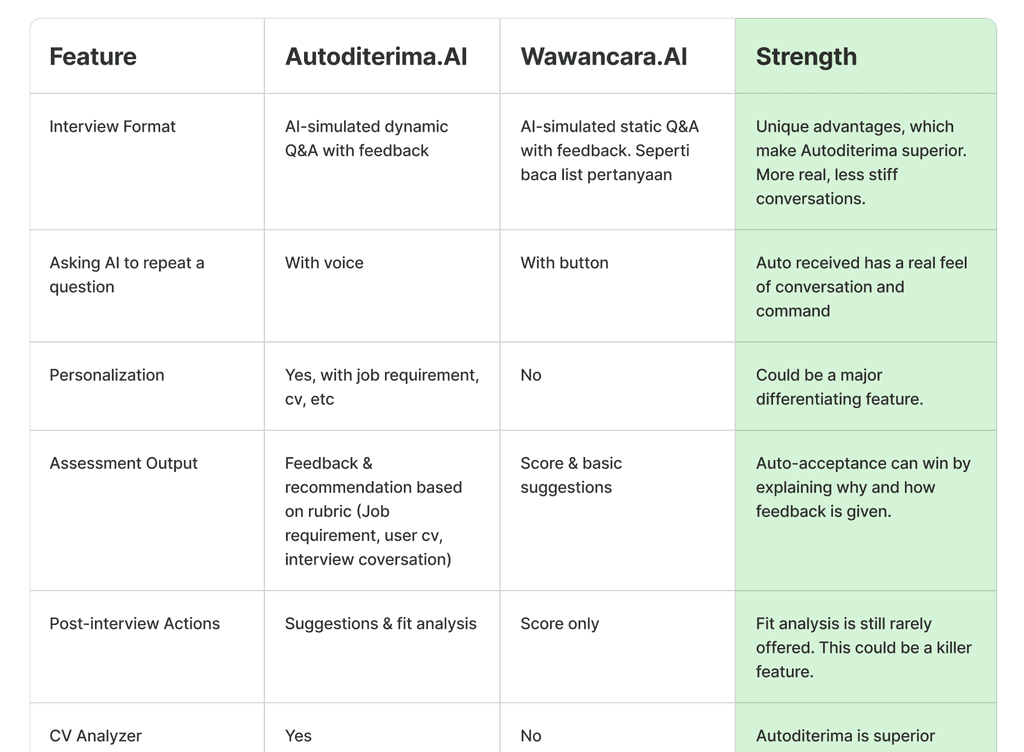

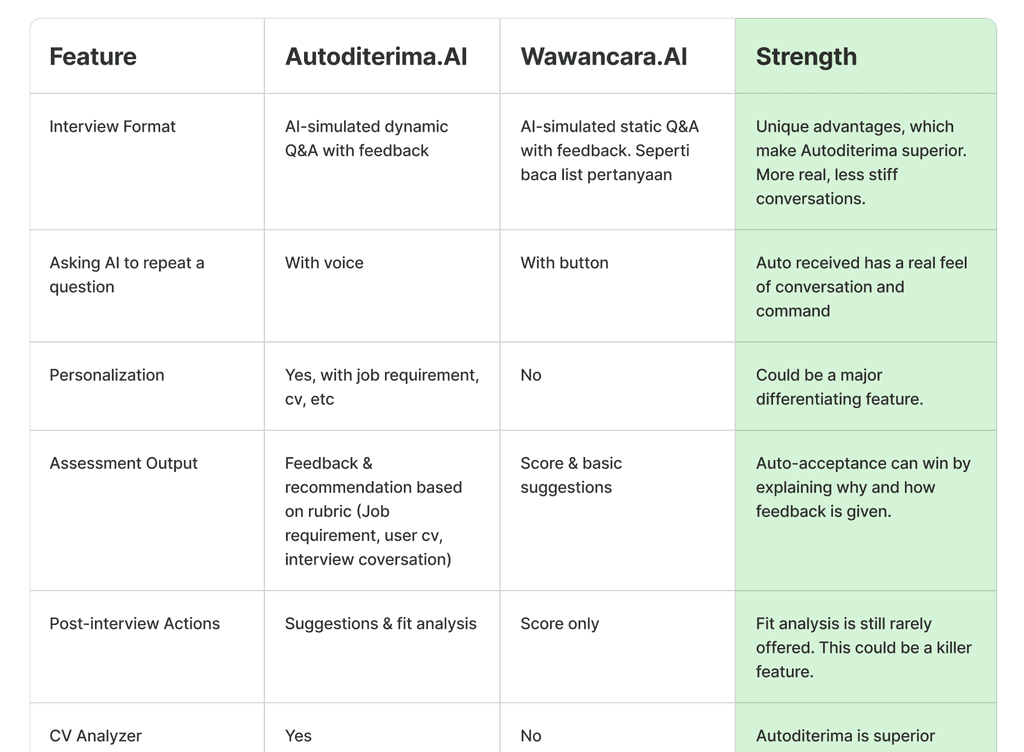

Competitor Analysis

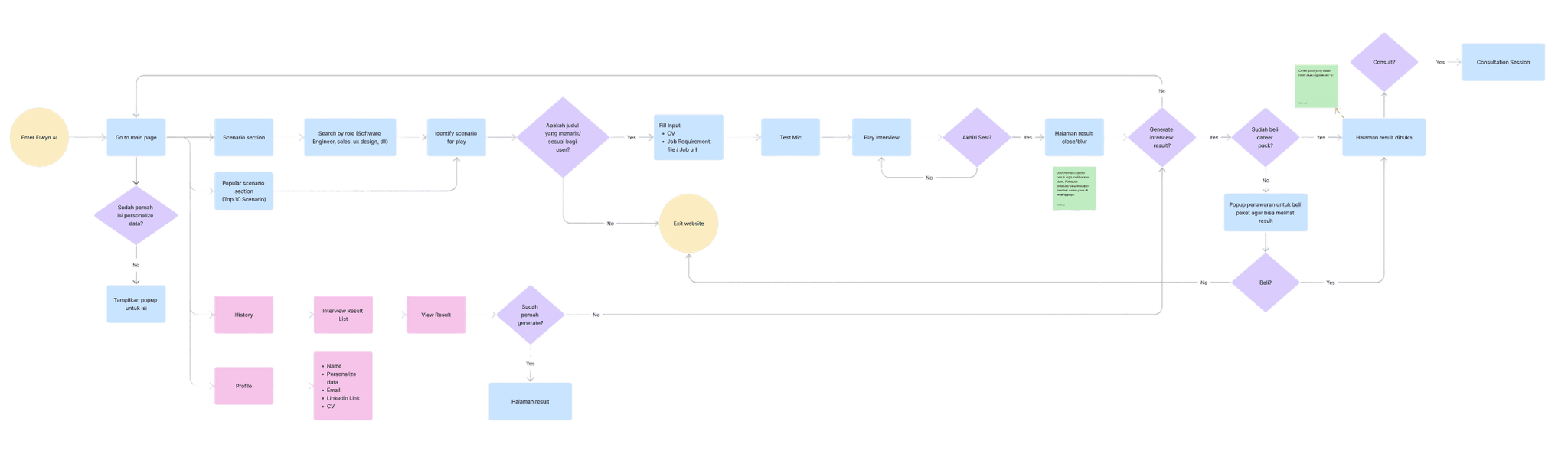

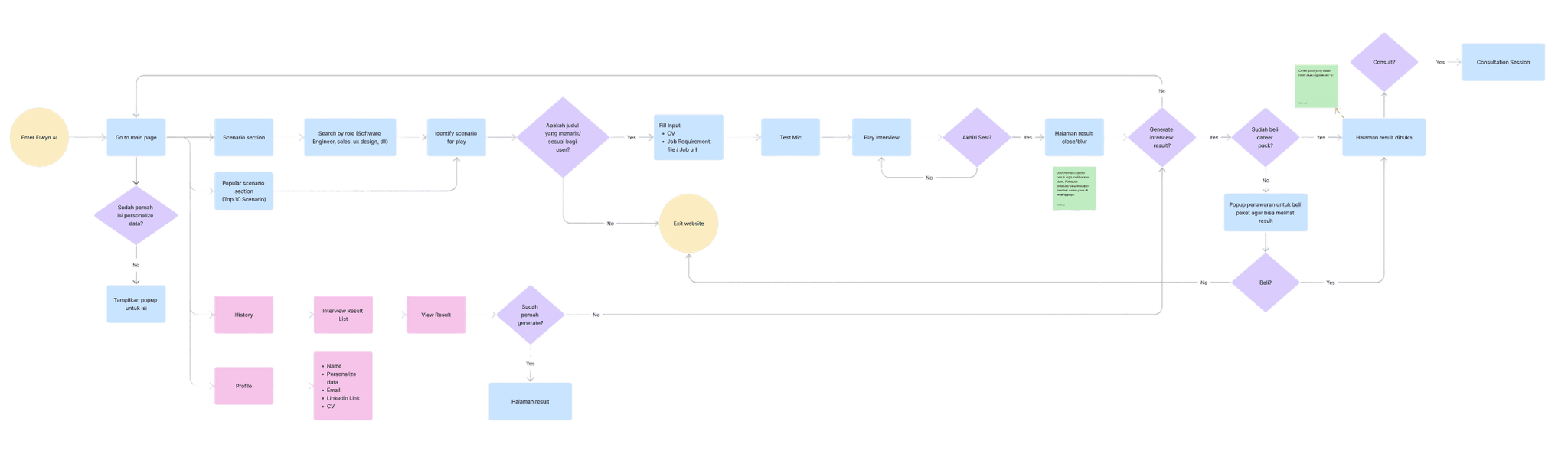

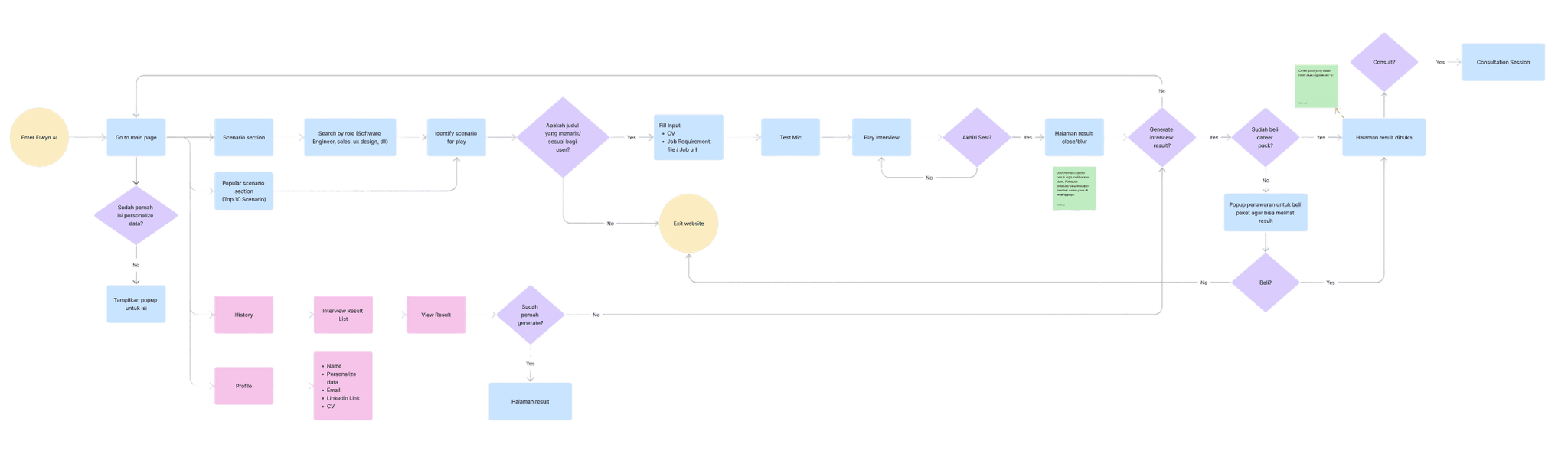

User Flow

Design Deliverable #1

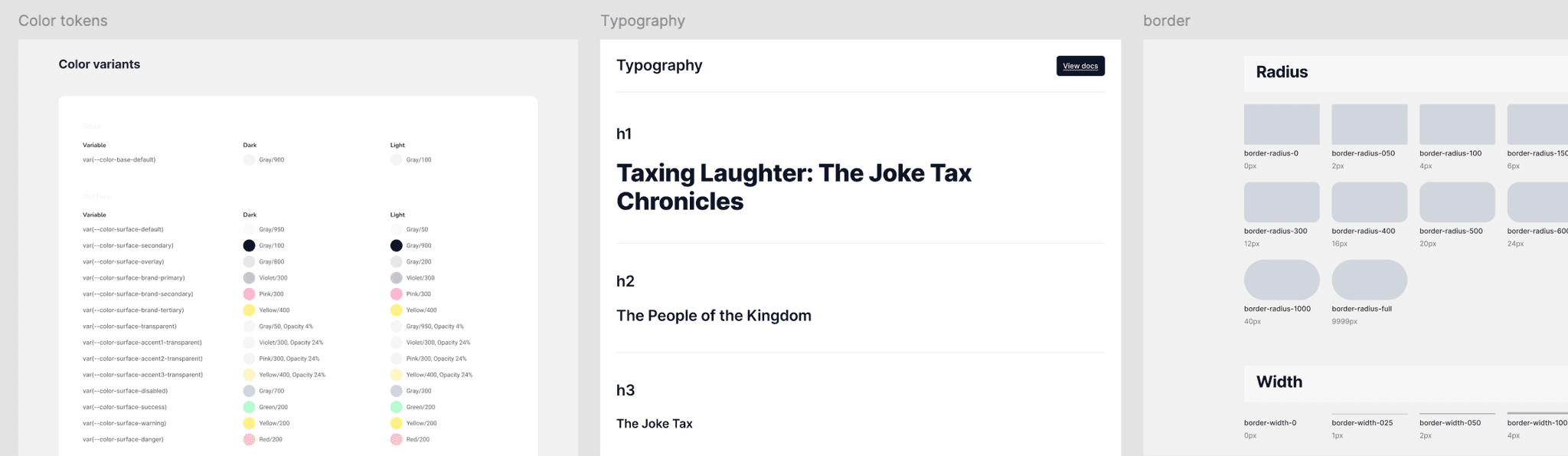

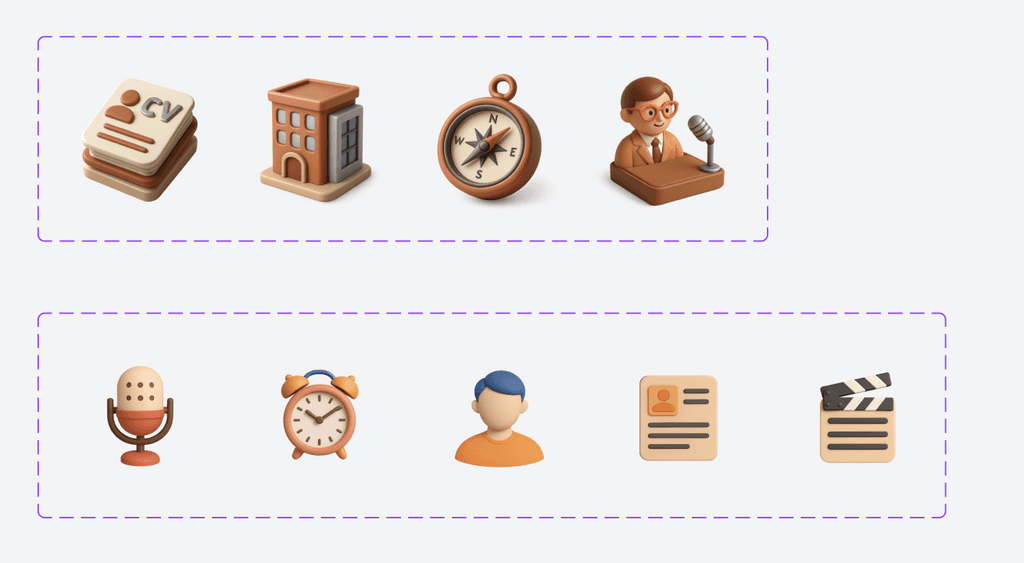

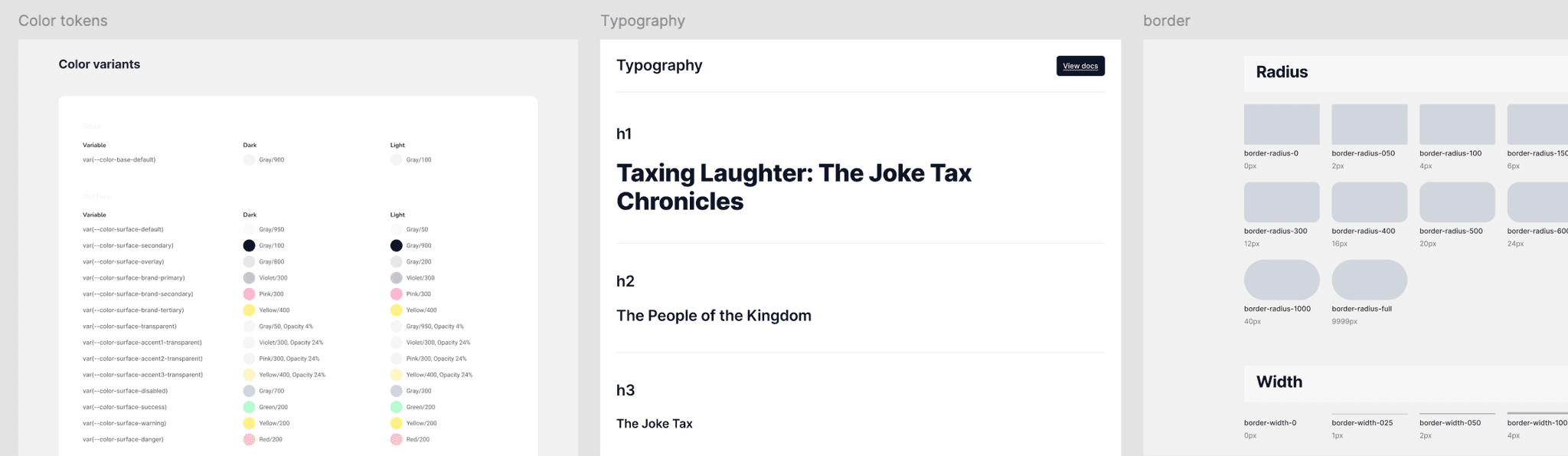

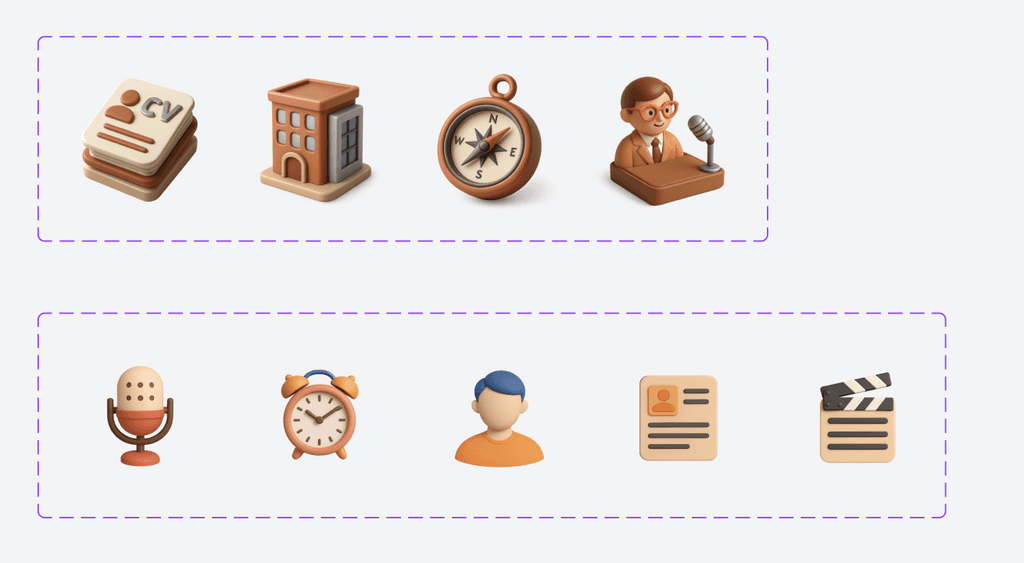

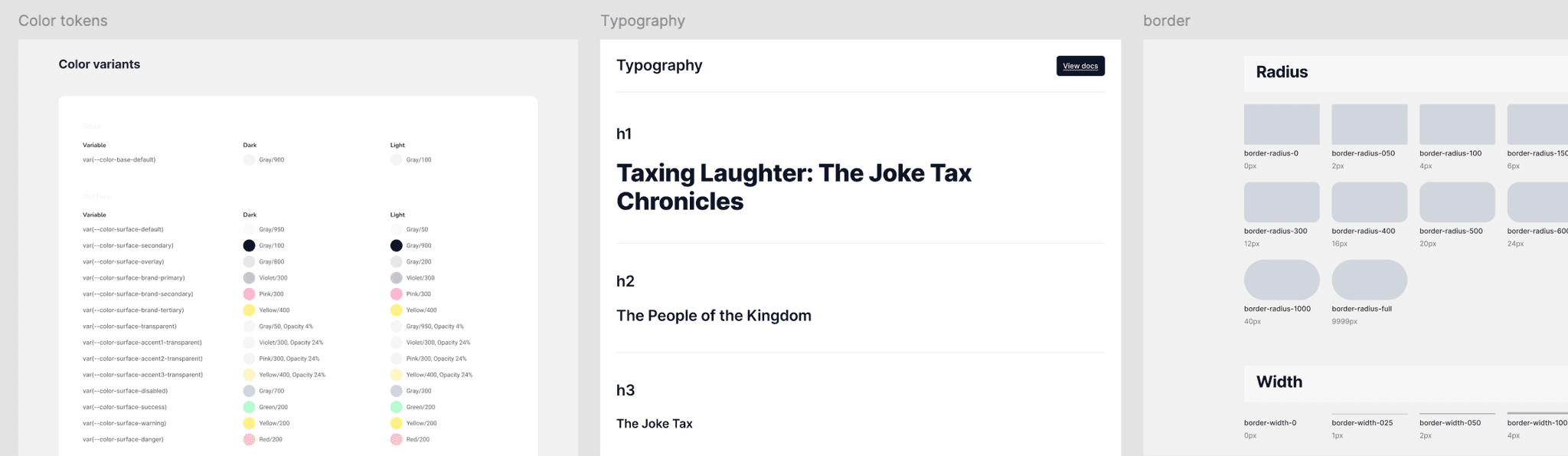

Foundation

Logo

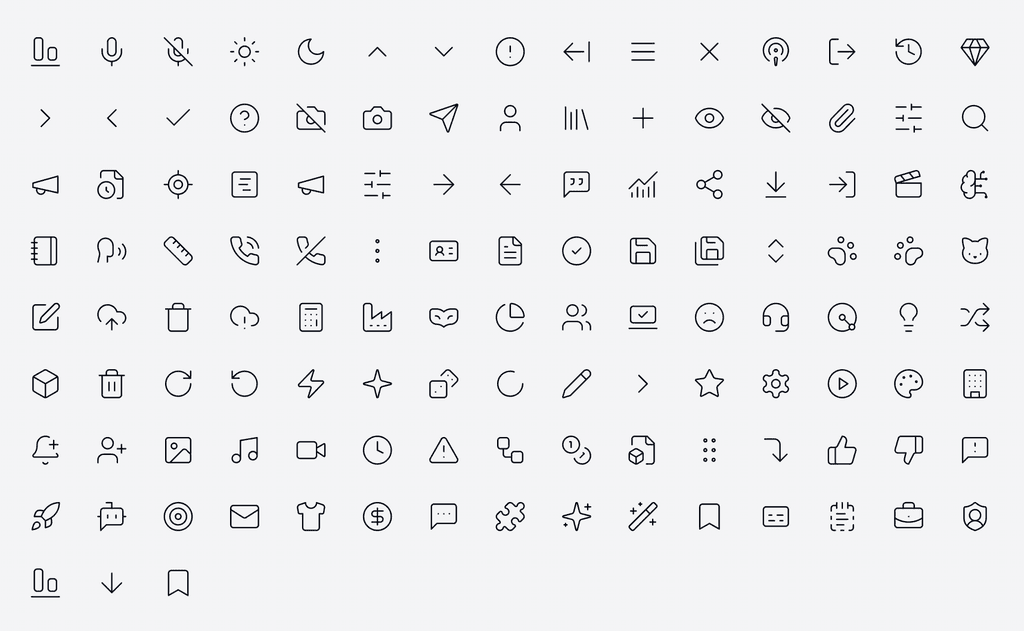

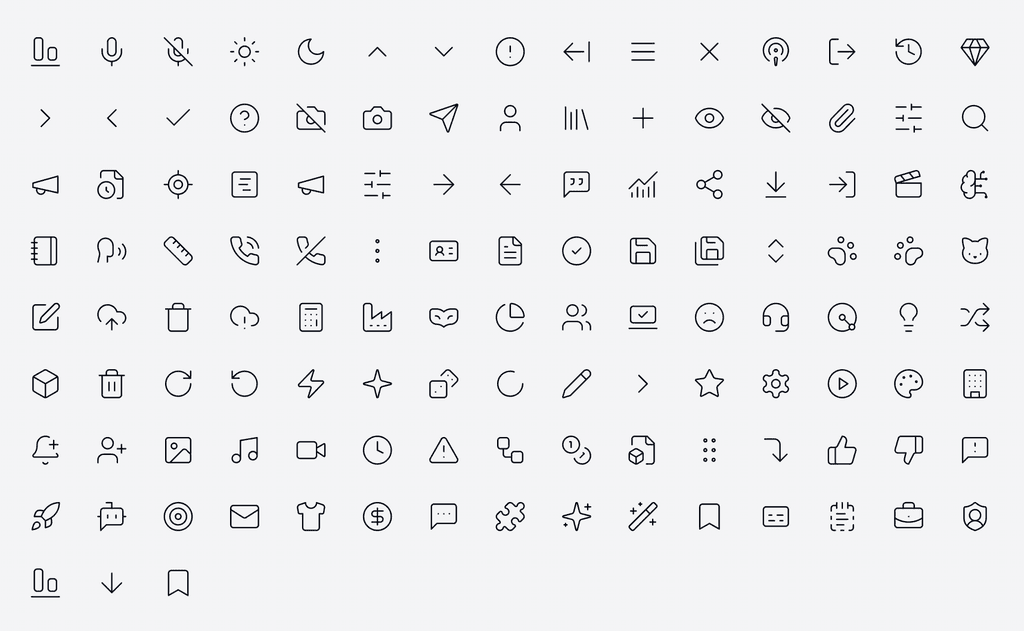

Icon Library

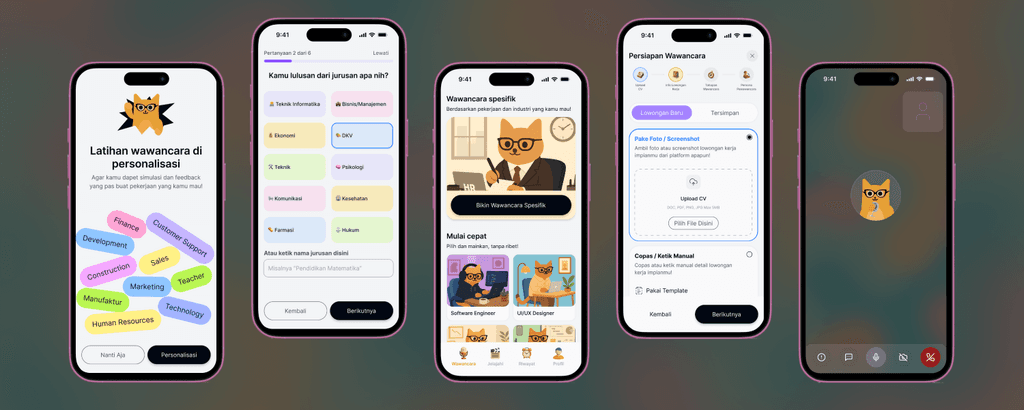

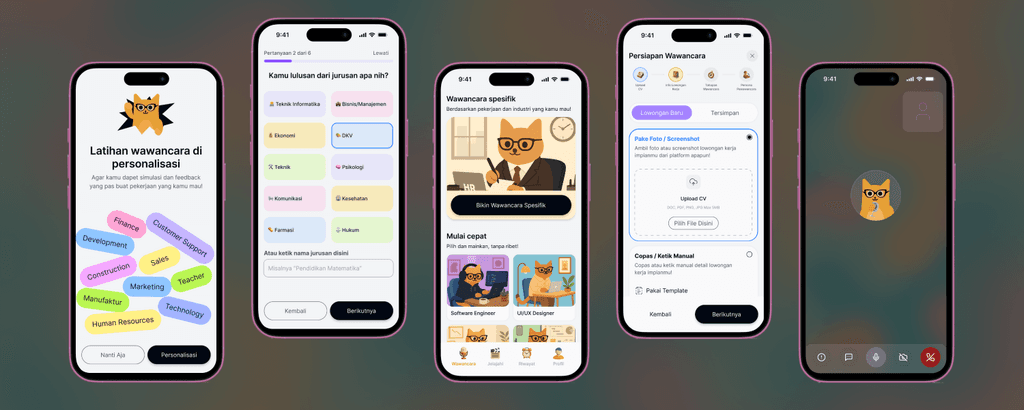

Hi-fi Design

MVP Test

Questions to ask:

Are users really helped by AI interview simulations?

Are users willing to pay?

How easy is it for users to find the interview simulation feature? Do they know how to get started?

To what extent do users trust the evaluation results and improvement suggestions from AI?

Where do users want more transparency in AI reasoning?

What do users do after receiving the results of the AI-generated interview simulation? Do they use it for further preparation? Or something else?

Result

Users who tried the trial were very interested in participating in the interview simulation. Within three months of the MVP's launch, the number of registered users had passed 2,000.

Users are willing to pay, but some feel the price is still too high. After three launches, there was a 10% conversion rate.

Users find it very easy to create sessions and interviews and to run them through to the evaluation results. The only difficulty arises when first starting an interview, because users must initiate the conversation. Dead clicks stand at 16%, and some UI changes are needed, along with minor adjustments to the conversation experience.

Users trust the AI's suggestions and feedback because they come with clear, logical reasoning.

Users want more detailed explanations when their answers are rated as wrong.

After receiving a score and evaluation, users improve the way they answer, whether or not they rerun the simulation.

Iterate

In progress…

AI Product Research

Goals

Understanding the needs and challenges of fresh graduates in facing job interviews

Evaluating the effectiveness of automated feedback in improving user interview readiness

Developing realistic AI-based interview simulations, based on CV and job requirements provided by users

Identifying success metrics to measure user suitability for the intended position

Identify User

Identify User Problem

Job to be done

Practice soft skills to succeed in real-world interactions

Workflow

Search for online soft skills training (YouTube videos, LinkedIn Learning, Udemy, etc).

Enroll in general courses or webinars.

Passively watch video lectures or read materials.

Try to apply skills in real or simulated settings (e.g., mock interviews, mock sales calls).

Seek feedback manually (from peers, mentors, managers).

Repeat practice individually, without structured feedback loops.

Pain Point

Most effort / time / Money spent on

Understand AI’s capabilities as a tool

Align the team on AI’s capabilities: what it can and cannot do well.

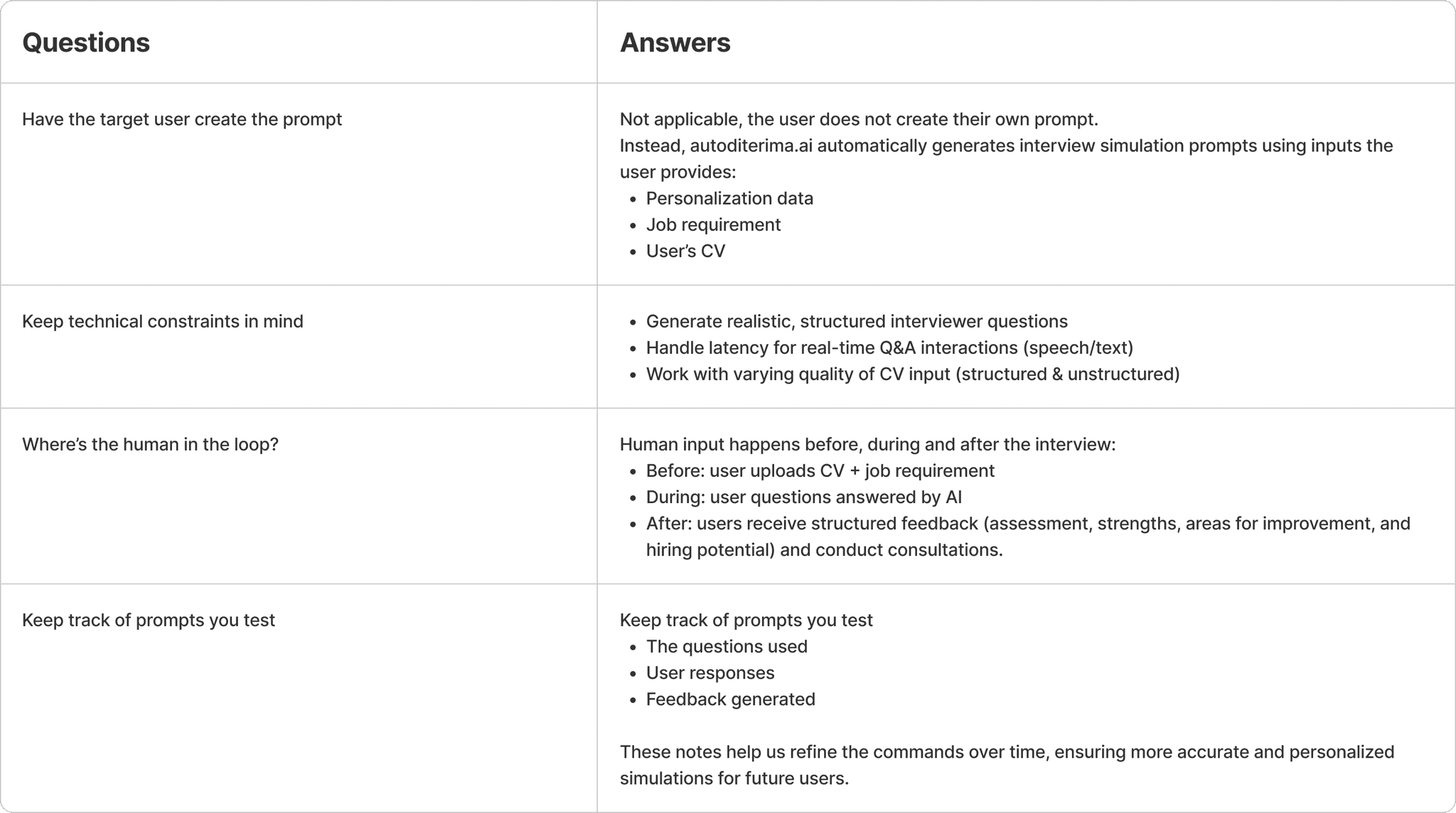

Defining the Solution

Prompting!

Competitor Analysis

User Flow

Design Deliverable #1

Foundation

Logo

Icon Library

Hi-fi Design

MVP Test

Questions to ask:

Are users really helped by AI interview simulations?

Are users willing to pay?

How easy is it for users to find the interview simulation feature? Do they know how to get started?

To what extent do users trust the evaluation results and improvement suggestions from AI?

Where do users want more transparency in AI reasoning?

What do users do after receiving the results of the AI-generated interview simulation? Do they use it for further preparation? Or something else?

Result

Users who tried the trial were very interested in participating in the interview simulation. Within three months of MPV's launch, the total number of registered users had reached over 2,000+.

Users are willing to pay, but some feel the price is still too high. After three launches, there was a 10% conversion rate.

It's very easy for users to create sessions and interviews, and to run them until the evaluation results are released. Some difficulties only arise when first starting an interview, as users must initiate the conversation. There are 16% dead clicks, and some UI changes need to be made, as well as minor adjustments to the conversation experience.

Users really trust the suggestions and input from AI, because they are accompanied by very logical reasons.

Users want more detailed reasons when their answers are rated wrong.

When receiving a score and evaluation, the user will improve the way they answer, whether by re-simulating or not.

Iterate

On Progress…

©2022 Rahmad